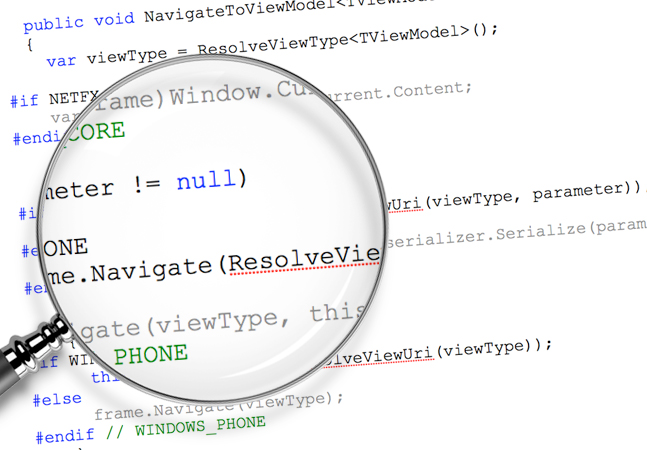

You wouldn't really name a variable "x," would you?

But it happens.

And it's not just "x." It's "usrdb," "item," "foo," "test," "blah" and more. These are real variable names that have been encountered in production-grade codebases.

"And that's just scary!" says Adrienne Braganza-Tacke.

Posted by David Ramel on 02/20/2024

Creating AI-powered applications is a natural fit for the Microsoft Azure cloud, but sorting out all the different options for the backing data stores can seem daunting.

All developers are looking for an edge to build applications that are data-driven and harness the power of AI, and Azure Databases provide a range of options for secure, scalable and highly available data applications using all the latest languages.

Posted by David Ramel on 02/05/2024

Hey, everyone! I'm Mickey Gousset, a staff DevOps architect at GitHub, and I am thrilled to be contributing to the VSLive! blog as a monthly how-to columnist. While every month you can expect tips, tricks and best-practices advice from the development world, for this installment, I thought I'd share some of my predictions for 2024 in the field of software development.

Posted by Mickey Gousset on 01/17/2024

Microsoft Fabric was described as "the AI-fication of Microsoft's data business" by RedmondMag writer Joey D'Antoni when it was unveiled at the company's Build 2023 developer conference.

He listed these highlights of the new offering:

Posted by David Ramel on 01/15/2024

The consensus is that we are entering a new world following the COVID-19 pandemic, so this might be the time for developers to add to their skillset. The more skills you have, the better positioned you are for future jobs where multifaceted developers will be in demand.

Companies restarting their business may not have the budget to hire different developers for different tasks. They may need an old-fashioned Jack- or Jill-of-all-trades. Combining classic application development expertise with Website abilities may be just the ticket for success when the economy begins reopening.

Posted by Richard Seeley on 04/22/2020

Continuous Integration and Continuous Deployment (CI/CD) is great in theory, but like its Agile and DevOps companions, it is not the easiest thing to put into practice.

GitHub Actions, introduced in the past year, are intended to make CI/CD easier for developer teams to implement. If you are struggling to keep your DevOps culture on track and make Agile work, it might be worth seeing if GitHub Actions can help. At least, GitHub and its new Microsoft owners sure hope so. Since Microsoft purchased San Francisco-based GitHub in 2018, some of the Redmond, Washington technology and marketing magic seems to have infused the company.

Posted by Richard Seeley on 03/24/2020

We caught up with Jason Bock, a practice lead for Magenic (http://www.magenic.com) and a Microsoft MVP (C#) with more than 20 years of experience working on business applications using a diverse set of frameworks and languages including C#, .NET, and JavaScript.

Bock, the author of ".NET Development Using the Compiler API," "Metaprogramming in .NET," and "Applied .NET Attributes," is leading the full-day “Visual Studio 2019 In-depth” workshop at the upcoming “Visual Studio Live!” conference set for March 30 - April 3 in Austin, Texas.

Posted by Richard Seeley on 02/21/2020

Microsoft’s Cosmos isn’t a new database. It’s been around since 2010. But when coupled with Azure, its globally distributed database-in-the-cloud model seems ideal for business app developers in 2020.

“Cosmos DB enables you to build highly responsive and highly available applications worldwide,” touts Microsoft in its introduction to its NoSQL database. “Cosmos DB transparently replicates your data wherever your users are, so your users can interact with a replica of the data that is closest to them.”

Posted by Richard Seeley on 02/13/2020

What's SAFe? Are you doing Scrum? This might sound like dialogue outtakes from an old movie. But it is actually about alternative approaches to application development.

Scrum is sometimes shown in all caps as SCRUM, but it is not an acronym. SAFe, on the other hand, stands for Scaled Agile Framework. Both have official websites: https://www.scrum.org/ and https://www.scaledagileframework.com/.

Posted by Richard Seeley on 12/12/2019

Business Intelligence (BI), which, like government intelligence, sounds faintly like an oxymoron, has been around a long time. The earliest reference to business intelligence appeared in 1865, according to a Wikipedia article. In more modern times, the term started to appear at IBM in the late 1950s, but Gartner is quoted as saying BI didn't gain traction in the corporate world until the 1990s. So it appears to be a term coined at the end of the Civil War that then moved into common usage with Decision Support Systems for data-based tactics and strategies, which were developed from 1965 to 1985. Any way you look at it, BI is not new technology.

Posted by Richard Seeley on 11/20/2019